Safer continues to innovate, providing new ways for digital platforms to moderate their online spaces. With purpose-built solutions for detecting child sexual abuse material (CSAM) and exploitation (CSE), Safer empowers technology companies to confront these online harms. In 2025, more tech companies than ever used Safer.

Now in its seventh year, Safer has continued evolving to address young people's changing behaviors and the online threats they face. To empower a full range of customers across multiple industries, Thorn incorporated new capabilities allowing Safer to detect a wider variety of child sexual exploitation.

New in 2025

Hive API Integration

To further extend the impact of our detection solutions, we’ve partnered with Hive to integrate Safer into Hive’s cloud-based, enterprise-grade APIs. In the first full year of partnership, Safer and Hive helped technology companies deliver safer digital environments.

In 2025, 27 platforms used Safer’s integration through Hive to disrupt the spread of CSAM and CSE:

- 4,293,015,961 files processed for known and novel CSAM detection

- 11,674 lines of text processed for detection of potential exploitation

Safer Predict Grooming Label

Expanding the available Safer Predict labels to include “grooming” was a major step forward in text-based abuse and exploitation detection. This new feature classifies grooming behavior and language that can indicate potential early-stage sexual exploitation or abuse of a minor.

Safer Predict Spanish Language Support

To enable abuse and exploitation detection across more communities, Safer Predict was updated to support Spanish-language recognition and detection. This new feature enables Safer Predict’s text classifier to identify Spanish-language conversations and surface potentially harmful messages for moderation.

SaferHash & SSVH Expansion

In 2025, we substantially expanded Safer’s hash data, strengthening its detection capabilities. The import included:

- 1.6 million new SaferHash (image) hashes, bringing our total to 6.3 million

- 50 million new SSVH (video) hashes, bringing our total to 64 million

Keeping hash data current and comprehensive is essential to giving our customers robust image and video detection.

Review Tool v2.9.0

This release makes it easier for reviewers to find, organize, and act on reports. It provides more powerful filtering and sorting, along with configurable report fields for alignment with the CyberTipline Reporting API. Overall, these updates streamline day-to-day content review work and help moderation teams prioritize high-risk content for accelerated review.

Customer Portal Improvements

An updated UI, with smarter search and navigation, delivered an improved portal experience for customers. We gave customers a single, consolidated hub for their impact metrics, hashlist management, and technical documentation.

Safer’s 2025 Impact

2025 was another impactful year in detection for Safer customers. By adopting and deploying a text classifier to detect text-based exploitation, technology companies gained a wider view of child harm to monitor and address. This broader detection horizon creates more opportunities for content moderation and a clearer picture of child safety risks. With Safer Predict, trust and safety teams have a powerful tool to identify potential threats, such as discussions about child sexual abuse material, sextortion threats, and potential grooming of minors.

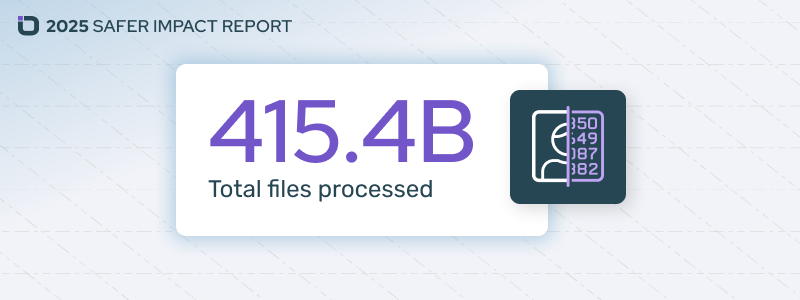

In 2025, Safer processed 415.4 billion files input by our customers. This impressive number was fueled by nearly 30 new Safer customers. Today, the Safer community comprises more than 86 platforms, with millions of users sharing an incredible amount of content daily. This represents a substantial foundation for the important work of preventing repeated and viral sharing of CSAM online.

CSAM detection

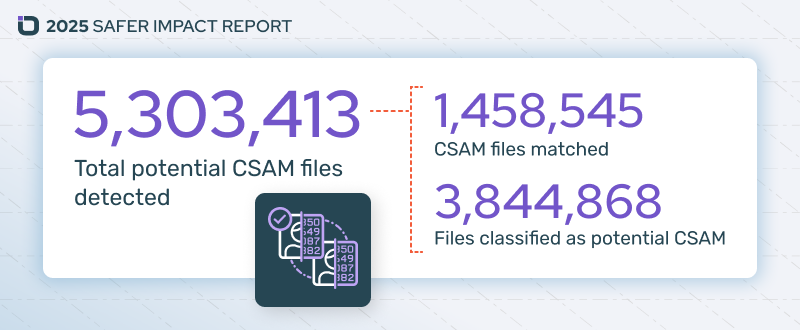

Safer detected nearly 1,500,000 images and videos of known CSAM in 2025. Safer uses multiple hashing methods to detect known CSAM. These hashes are matched against hash lists compiled by various sources, including the National Center for Missing & Exploited Children (NCMEC), which triple-verifies submitted materials. Hash matching allows Safer to programmatically determine whether a file is previously verified CSAM while avoiding unnecessary exposure of content moderators to harmful content.
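For readers unfamiliar with hash matching, the idea can be illustrated with a minimal sketch. This is not Safer's API or its hashing methods: the function names, the use of SHA-256, and the sample hash list are all assumptions made for illustration. It shows only exact matching with a cryptographic hash; a production system would likely also use hashes that tolerate small edits to a file, which this sketch does not attempt.

```python
import hashlib


def file_digest(data: bytes) -> str:
    """Return a SHA-256 hex digest of the file bytes (illustrative choice of hash)."""
    return hashlib.sha256(data).hexdigest()


def is_known(data: bytes, known_hashes: set[str]) -> bool:
    """Check whether the file's digest appears in a list of previously verified hashes."""
    return file_digest(data) in known_hashes


# Hypothetical hash list; real lists are compiled by verified sources.
known_hashes = {file_digest(b"previously verified example bytes")}

print(is_known(b"previously verified example bytes", known_hashes))  # True
print(is_known(b"some other file", known_hashes))  # False
```

Because only digests are compared, the match is made without anyone viewing the file itself, which is the property the paragraph above describes.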

In addition to detecting known CSAM, our predictive AI detected more than 3,840,000 files of potential novel CSAM. Safer’s image and video classifiers use machine learning to predict whether new content is likely to be CSAM and flag it for further review. Identifying novel CSAM allows it to be reported, verified, and added to hash libraries, accelerating future detection.
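As a rough illustration of the flag-for-review step, the sketch below routes classifier scores into a review queue. Everything in it is hypothetical: the `Prediction` type, the `select_for_review` helper, and the 0.8 threshold are assumptions for the example, not Safer's actual interface or settings.

```python
from dataclasses import dataclass

REVIEW_THRESHOLD = 0.8  # hypothetical cutoff; each platform would tune its own


@dataclass
class Prediction:
    file_id: str
    score: float  # classifier's estimated likelihood, between 0 and 1


def select_for_review(predictions, threshold=REVIEW_THRESHOLD):
    """Keep predictions at or above the threshold, highest score first."""
    flagged = [p for p in predictions if p.score >= threshold]
    return sorted(flagged, key=lambda p: p.score, reverse=True)


predictions = [
    Prediction("a", 0.95),
    Prediction("b", 0.10),
    Prediction("c", 0.82),
]
queue = select_for_review(predictions)
print([p.file_id for p in queue])  # ['a', 'c']
```

Sorting by score is one simple way to let reviewers see the highest-likelihood files first; a human reviewer still makes the final determination.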

Detecting potential child sexual exploitation

Safer launched a text classifier in 2024, and in 2025 alone it processed more than 318,590,000 lines of text. This capability adds an important dimension of detection, helping platforms identify sextortion, grooming, and other abuse behaviors happening via text or messaging features. In all, more than 1.3 million lines of potential child exploitation were identified, helping content moderators respond to potentially threatening behavior.

Safer’s All-Time Impact

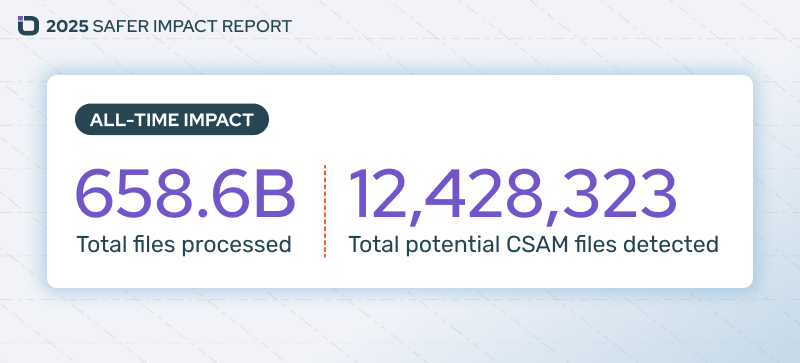

Safer’s impact continues to grow rapidly: in 2025 alone, the community nearly tripled the all-time total of files processed, from roughly 243 billion to 658.6 billion. Since 2019, Safer has processed 658.6 billion files and 334 million lines of text, resulting in the detection of more than 12.4 million potential CSAM files and nearly 1.4 million instances of potential child exploitation. Every file or line of text processed, and every potential match made, helps shape a safer internet for children and platform users.

Build a Safer internet

Curtailing platform misuse and addressing online sexual harms against children requires an “all-hands” approach. Many trust and safety teams are being asked to do more with fewer and fewer resources. Additionally, some platforms suffer from siloed data and policy gaps that jeopardize effective content moderation. Thorn is here to provide resources and solutions to help trust and safety teams craft effective and cohesive child safety strategies at scale.

Platforms that have organized and aligned their content moderation efforts provide their users with clear policies, their teams with cutting-edge tools, and children with the freedom to simply be kids.